#dynamodb #aws #database #nosql

DynamoDB Joins: A Comprehensive Guide

Lakindu Hewawasam

March 21, 2026

5 min read

DynamoDB does not support traditional join operations. That's not a limitation - it's a deliberate architectural choice. Joins are resource-intensive queries that do not scale well as your database grows, and DynamoDB is built around the principle that every query should execute in predictable, low-latency time regardless of data volume.

So how do you model relational data in DynamoDB? The answer is single table design.

Why DynamoDB Doesn't Have Joins

In a relational database, a join combines rows from two or more tables based on a related column. It's flexible and expressive, but it comes at a cost: the database engine has to scan, sort, and merge data across potentially large datasets at query time.

DynamoDB trades that flexibility for performance. It stores data in a way that makes individual lookups and access patterns extremely fast, but it requires you to think about your access patterns upfront when designing your schema.

Single Table Design

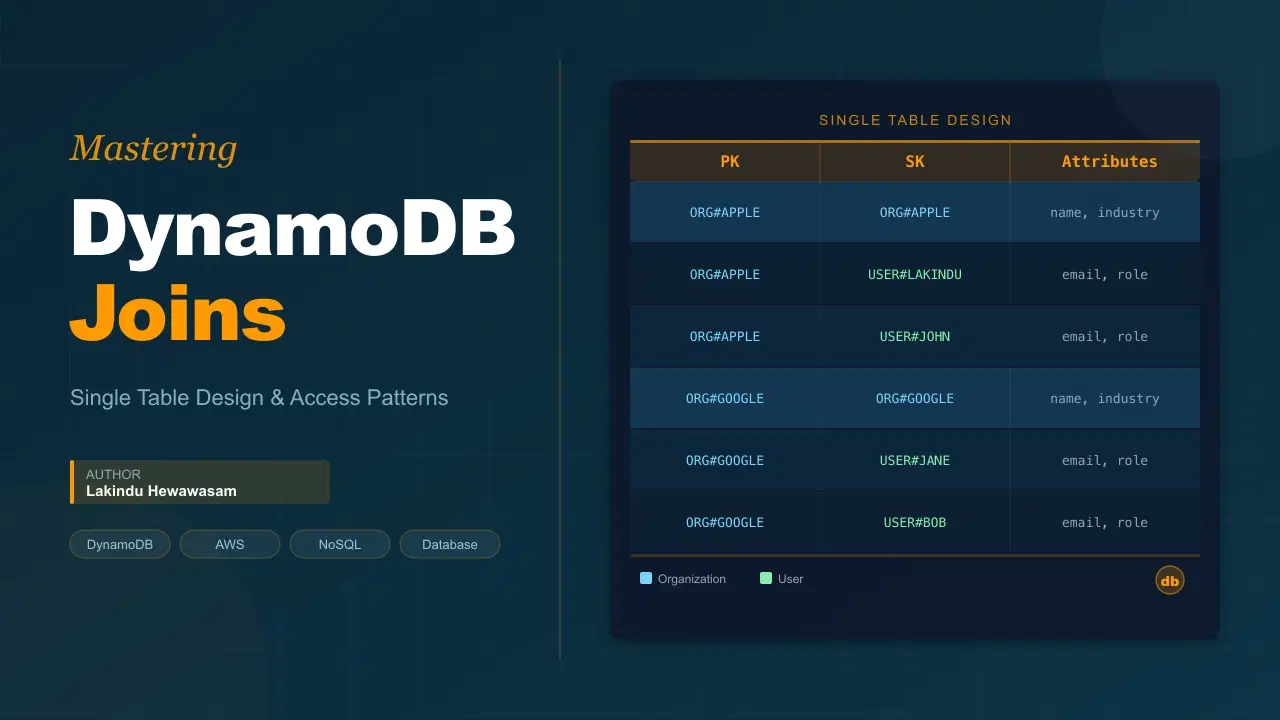

The most common pattern for modeling relational data in DynamoDB is single table design - storing all related entities in one table using composite primary keys.

Instead of keeping organisations and users in separate tables and joining them, you store both in the same table with a structure like:

| PK | SK | Attributes |
|---|---|---|
| ORG#APPLE | ORG#APPLE | name, industry, createdAt |
| ORG#APPLE | USER#LAKINDU | email, role, joinedAt |
| ORG#APPLE | USER#JOHN | email, role, joinedAt |
| ORG#GOOGLE | ORG#GOOGLE | name, industry, createdAt |
| ORG#GOOGLE | USER#JANE | email, role, joinedAt |

Key Design Principles

1. Generic Key Naming

Use generic attribute names like PK and SK instead of entity-specific names like orgId or userId. This allows the same table to store multiple entity types without conflicting schema.

2. Prefixed Values

Use prefixes to distinguish entity types within the same key namespace:

- ORG#APPLE - an organisation record
- USER#LAKINDU - a user record

This makes it easy to filter by entity type using DynamoDB's begins_with() condition.
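The prefixing convention can be kept in one place with a few small helpers. The sketch below is illustrative only: the function names (`orgKey`, `userKey`, `orgItem`, `userItem`, `entityType`) and the sample attribute values are assumptions, not part of any DynamoDB API.

```javascript
// Hypothetical helpers that build prefixed key values for the single table.
const ORG_PREFIX = "ORG#";
const USER_PREFIX = "USER#";

function orgKey(orgId) {
  return `${ORG_PREFIX}${orgId.toUpperCase()}`;
}

function userKey(userId) {
  return `${USER_PREFIX}${userId.toUpperCase()}`;
}

// The organisation item uses the same value for PK and SK, as in the table above.
function orgItem(orgId, attributes) {
  return { ...attributes, PK: orgKey(orgId), SK: orgKey(orgId) };
}

// A user item lives in its organisation's partition and is identified by its SK.
function userItem(orgId, userId, attributes) {
  return { ...attributes, PK: orgKey(orgId), SK: userKey(userId) };
}

// Recover the entity type from a key value by reading the text before "#".
function entityType(key) {
  return key.slice(0, key.indexOf("#"));
}

// Sample values are made up for illustration.
const apple = orgItem("apple", { name: "Apple" });
const lakindu = userItem("apple", "lakindu", { role: "admin" });

console.log(apple.PK, apple.SK);     // ORG#APPLE ORG#APPLE
console.log(lakindu.PK, lakindu.SK); // ORG#APPLE USER#LAKINDU
console.log(entityType(lakindu.SK)); // USER
```

Centralising the prefixes this way means a typo such as `USR#` cannot creep into one code path and silently hide items from `begins_with()` queries.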

3. Composite Sort Keys

The sort key lets you store and retrieve multiple related items under a single partition key. All users belonging to ORG#APPLE share the same partition key, which means they're stored together and can be fetched in a single query.
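To build intuition for why this works, here is a small in-memory model of the idea: items grouped by partition key and kept ordered by sort key. It is a teaching sketch only and says nothing about how DynamoDB is implemented internally; `table`, `putItem`, and `query` are hypothetical names.

```javascript
// In-memory stand-in for a table: partition key -> items sorted by sort key.
const table = new Map();

function putItem(item) {
  const partition = table.get(item.PK) ?? [];
  // Replace any existing item with the same SK, then keep the partition sorted.
  const others = partition.filter((existing) => existing.SK !== item.SK);
  others.push(item);
  others.sort((a, b) => (a.SK < b.SK ? -1 : a.SK > b.SK ? 1 : 0));
  table.set(item.PK, others);
}

// Mimics a Query with "PK = :pk AND begins_with(SK, :prefix)".
// Only one partition is ever inspected, however many partitions exist.
function query(pk, skPrefix = "") {
  const partition = table.get(pk) ?? [];
  return partition.filter((item) => item.SK.startsWith(skPrefix));
}

putItem({ PK: "ORG#APPLE", SK: "USER#LAKINDU" });
putItem({ PK: "ORG#APPLE", SK: "ORG#APPLE" });
putItem({ PK: "ORG#APPLE", SK: "USER#JOHN" });
putItem({ PK: "ORG#GOOGLE", SK: "USER#JANE" });

console.log(query("ORG#APPLE", "USER#").map((item) => item.SK));
// [ 'USER#JOHN', 'USER#LAKINDU' ]
```

The point of the model is that the lookup never touches ORG#GOOGLE: the partition key narrows the search to one group, and the sort key prefix selects a contiguous slice of it.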

Querying Related Data

To fetch all users in an organisation, you issue a single Query call:

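    const { DynamoDBClient, QueryCommand } = require("@aws-sdk/client-dynamodb");

    const client = new DynamoDBClient({ region: "us-east-1" });

    // Fetch every user item stored under the ORG#APPLE partition.
    // Wrapped in an async function because CommonJS modules do not allow top-level await.
    async function getOrgUsers() {
      const result = await client.send(new QueryCommand({
        TableName: "MyTable",
        // Both conditions target the primary key, so no FilterExpression is needed.
        KeyConditionExpression: "PK = :pk AND begins_with(SK, :skPrefix)",
        ExpressionAttributeValues: {
          ":pk": { S: "ORG#APPLE" },
          ":skPrefix": { S: "USER#" }
        }
      }));
      return result.Items;
    }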

This returns all user records under ORG#APPLE with one Query operation (paginated only if the results exceed 1 MB) - no join required.
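When an organisation has more users than fit in one response, the Query has to be repeated with the `LastEvaluatedKey` returned by the previous page. A minimal sketch of that loop follows. `queryAllPages` and `sendQuery` are hypothetical names; `sendQuery` stands for any function that takes Query parameters and resolves to a Query response, for example `(params) => client.send(new QueryCommand(params))`. A fake sender with made-up pages is used here so the example runs without AWS credentials.

```javascript
// Collect the items from every page of a Query.
async function queryAllPages(sendQuery, params) {
  const items = [];
  let exclusiveStartKey;
  do {
    // Only add ExclusiveStartKey once a previous page has supplied one.
    const request = exclusiveStartKey
      ? { ...params, ExclusiveStartKey: exclusiveStartKey }
      : params;
    const page = await sendQuery(request);
    items.push(...(page.Items ?? []));
    // LastEvaluatedKey is absent once the final page has been read.
    exclusiveStartKey = page.LastEvaluatedKey;
  } while (exclusiveStartKey);
  return items;
}

// Fake sender serving two made-up pages, standing in for a real DynamoDB client.
const requests = [];
const fakePages = [
  {
    Items: [{ SK: { S: "USER#JOHN" } }],
    LastEvaluatedKey: { PK: { S: "ORG#APPLE" }, SK: { S: "USER#JOHN" } },
  },
  { Items: [{ SK: { S: "USER#LAKINDU" } }] },
];
async function fakeSend(params) {
  requests.push(params);
  return fakePages[requests.length - 1];
}

const allUsers = queryAllPages(fakeSend, {
  TableName: "MyTable",
  KeyConditionExpression: "PK = :pk AND begins_with(SK, :skPrefix)",
  ExpressionAttributeValues: {
    ":pk": { S: "ORG#APPLE" },
    ":skPrefix": { S: "USER#" },
  },
});

allUsers.then((items) => console.log(items.map((item) => item.SK.S)));
// [ 'USER#JOHN', 'USER#LAKINDU' ]
```

Passing the sender in as an argument keeps the paging logic testable without a live table.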

To fetch the organisation record itself alongside its users, drop the begins_with condition and query on the partition key alone:

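    // Fetch the organisation item and all of its user items together.
    // Reuses the client created in the previous example.
    async function getOrgWithUsers() {
      const result = await client.send(new QueryCommand({
        TableName: "MyTable",
        KeyConditionExpression: "PK = :pk",
        ExpressionAttributeValues: {
          ":pk": { S: "ORG#APPLE" }
        }
      }));
      return result.Items;
    }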

This returns both the ORG#APPLE record and all associated USER# records in one call.
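Because the response mixes entity types, the application has to separate them, and the SK prefix is enough to do that. The following is a sketch under assumptions: `splitOrgAndUsers` is a hypothetical helper, and the sample items use made-up attribute values in DynamoDB's attribute-value format.

```javascript
// Example response items in DynamoDB's attribute-value format (values are made up).
const items = [
  { PK: { S: "ORG#APPLE" }, SK: { S: "ORG#APPLE" }, name: { S: "Apple" } },
  { PK: { S: "ORG#APPLE" }, SK: { S: "USER#JOHN" }, role: { S: "member" } },
  { PK: { S: "ORG#APPLE" }, SK: { S: "USER#LAKINDU" }, role: { S: "admin" } },
];

// Separate the organisation item from its users by inspecting the SK prefix.
function splitOrgAndUsers(queryItems) {
  const result = { org: null, users: [] };
  for (const item of queryItems) {
    const sk = item.SK.S;
    if (sk.startsWith("ORG#")) {
      result.org = item;
    } else if (sk.startsWith("USER#")) {
      result.users.push(item);
    }
    // Items with any other prefix are ignored by this sketch.
  }
  return result;
}

const { org, users } = splitOrgAndUsers(items);
console.log(org.name.S);                        // Apple
console.log(users.map((user) => user.SK.S));    // [ 'USER#JOHN', 'USER#LAKINDU' ]
```

This grouping step is the application-side counterpart of what a join would have assembled in a relational database, except that it runs over items already fetched from a single partition.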

Performance Advantages

Single table design is faster than emulating joins across multiple DynamoDB tables because:

- Fewer API calls - related data is retrieved in a single Query, not across multiple GetItem calls or an application-side join.
- Colocated storage - items sharing a partition key are stored together on the same partition, making reads faster and cheaper.
- Predictable latency - DynamoDB is designed to deliver single-digit millisecond reads regardless of dataset size, which a cross-table join cannot provide.

Many-to-Many Relationships: Adjacency List Pattern

For many-to-many relationships (e.g., users belonging to multiple organisations), the DynamoDB documentation recommends the Adjacency List Design Pattern.

Each relationship is stored as its own item:

| PK | SK |
|---|---|
| USER#LAKINDU | ORG#APPLE |
| USER#LAKINDU | ORG#STRIPE |
| ORG#APPLE | USER#LAKINDU |
| ORG#APPLE | USER#JOHN |

This stores the relationship from both sides, allowing you to query in either direction:

- All orgs a user belongs to → query by PK = USER#LAKINDU
- All users in an org → query by PK = ORG#APPLE

The trade-off is write duplication: every relationship is written twice. This is a deliberate choice - DynamoDB optimises for reads, and the write overhead is predictable.
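One way to keep the two copies from drifting apart is to write them together. The sketch below builds the input for a single transactional write containing both directions of a membership. Using a transaction is an assumption of this example rather than something the pattern requires, and `buildMembershipWrite` is a hypothetical helper. The resulting object has the shape accepted by `TransactWriteItemsCommand` in `@aws-sdk/client-dynamodb`.

```javascript
// Build both directions of a user-organisation membership as one write request.
function buildMembershipWrite(tableName, userId, orgId) {
  const userKey = `USER#${userId}`;
  const orgKey = `ORG#${orgId}`;
  return {
    TransactItems: [
      // Lets you list the organisations a user belongs to.
      { Put: { TableName: tableName, Item: { PK: { S: userKey }, SK: { S: orgKey } } } },
      // Lets you list the users in an organisation.
      { Put: { TableName: tableName, Item: { PK: { S: orgKey }, SK: { S: userKey } } } },
    ],
  };
}

const params = buildMembershipWrite("MyTable", "LAKINDU", "STRIPE");
console.log(params.TransactItems.length);            // 2
console.log(params.TransactItems[0].Put.Item.PK.S);  // USER#LAKINDU
console.log(params.TransactItems[1].Put.Item.PK.S);  // ORG#STRIPE
```

Sending both puts in one transaction means either both relationship items exist or neither does, at the cost of the higher write capacity that transactional writes consume.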

When Single Table Design Gets Complex

Single table design requires you to know your access patterns before building. If your access patterns change significantly, restructuring a single table is more painful than restructuring multiple smaller tables.

For teams just starting out or for simpler domains, using multiple tables is perfectly valid. The performance gap only becomes meaningful at scale, and the added design complexity of single table design isn't always worth it early on.

Summary

DynamoDB doesn't have joins because joins don't scale. Instead, it gives you the tools to model related data in a way that keeps queries fast and predictable:

- Use single table design to colocate related entities

- Use composite primary keys (PK + SK) with prefixed values to distinguish entity types

- Use begins_with() in sort key conditions to filter by entity type

- Use the Adjacency List Pattern for many-to-many relationships

The mental shift from relational thinking to DynamoDB thinking takes time. But once it clicks, you'll find that most access patterns you'd reach for a join to solve can be answered in a single, fast query.

Further reading: DynamoDB Developer Guide - Best Practices for NoSQL